Overview and implementation of Logistic Regression analysis.

%matplotlib inline
import matplotlib
import matplotlib.pyplot as plt
import numpy as np
from regression__utils import *

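The model's hypothesis is the sigmoid of the linear combination $\theta^Tx$:

$$ \large h_\theta(x)=\frac{1}{1+e^{-\theta^Tx}} $$

where, stacking the $n$ samples as rows of the matrix $X$ (with a leading column of ones for the intercept $\theta_0$), the linear term for all samples is:

$$ \large X\theta= \begin{bmatrix} 1 & x_{11} & \cdots & x_{1i} \\ 1 & x_{21} & \cdots & x_{2i} \\ \vdots & \vdots & \ddots & \vdots \\ 1 & x_{n1} & \cdots & x_{ni} \end{bmatrix} \begin{bmatrix} \theta_0 \\ \theta_1 \\ \vdots \\ \theta_i \end{bmatrix} $$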
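As a rough sketch of the product above, assuming two hypothetical features and made-up parameter values (none of these numbers come from the notebook), the column of ones can be prepended with `np.column_stack` and the sigmoid applied to the result:

```python
import numpy as np

# Hypothetical feature columns (n = 3 samples, i = 2 features); illustrative only.
x1 = np.array([0.5, 1.0, 1.5])
x2 = np.array([2.0, 0.0, -1.0])

# Design matrix: the leading column of ones pairs with the intercept theta_0.
X = np.column_stack([np.ones_like(x1), x1, x2])   # shape (3, 3)

# Hypothetical parameter vector [theta_0, theta_1, theta_2].
theta = np.array([0.1, -0.4, 0.3])

z = X @ theta                    # one linear score per sample, shape (3,)
h = 1.0 / (1.0 + np.exp(-z))     # sigmoid maps each score into (0, 1)
```

Each entry of `z` is the linear score of one sample, so a single matrix product replaces a loop over samples.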

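def arraycast(f):
    '''
    Decorator that casts the input vectors and matrices to NumPy arrays
    '''
    def wrap(self, *X, y=[]):
        # Stack the feature sequences: one row per feature
        X = np.array(X)
        # Transpose to one row per sample and prepend a column of ones (intercept)
        X = np.insert(X.T, 0, 1, 1)
        if list(y):
            # Labels as a row vector of shape (1, n)
            y = np.array(y)[np.newaxis]
            return f(self, X, y)
        return f(self, X)
    return wrap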
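To make the shapes concrete, the casting steps of the decorator can be replayed by hand. This is only a sketch: the input values below are hypothetical, and the variable `Y` is introduced here for illustration.

```python
import numpy as np

# Hypothetical inputs: two feature sequences and their labels.
x1, x2, y = [1.0, 2.0, 3.0], [4.0, 5.0, 6.0], [0, 1, 1]

X = np.array((x1, x2))          # shape (2, 3): one row per feature
X = np.insert(X.T, 0, 1, 1)     # transpose to (3, 2), then prepend a column of ones
Y = np.array(y)[np.newaxis]     # shape (1, 3): labels as a row vector

print(X.shape, Y.shape)         # (3, 3) (1, 3)
```

The labels end up as a row vector, which is why `fit` transposes them (`y.T`) before subtracting them from the column of predictions.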

class logisticRegression(object):
    def __init__(self, rate=0.001, iters=1024):
        self._rate = rate      # learning rate
        self._iters = iters    # number of gradient descent iterations
        self._theta = None

    @property
    def theta(self):
        return self._theta

    def _sigmoid(self, Z):
        return 1/(1 + np.exp(-Z))

    def _dsigmoid(self, Z):
        return self._sigmoid(Z)*(1 - self._sigmoid(Z))

    @arraycast
    def fit(self, X, y=[]):
        # Start from a row vector of ones, one parameter per column of X
        self._theta = np.ones((1, X.shape[-1]))
        for i in range(self._iters):
            thetaTx = np.dot(X, self._theta.T)
            h = self._sigmoid(thetaTx)
            # Prediction error per sample, shape (n, 1)
            delta = h - y.T
            grad = np.dot(X.T, delta).T
            self._theta -= grad*self._rate

    @arraycast
    def pred(self, x):
        # Classify as positive when the predicted probability exceeds 0.5
        return self._sigmoid(np.dot(x, self._theta.T)) > 0.5
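The update inside `fit` can be read as batch gradient descent. Assuming the cost being minimized is the usual cross-entropy (the notebook does not state it), with $h_k$ the sigmoid of $\theta^Tx$ for sample $k$, $x_{k0}=1$, and $\alpha$ the learning rate `rate`:

$$ \large J(\theta)=-\sum_{k=1}^{n}\left[y_k\log h_k+(1-y_k)\log(1-h_k)\right] $$

$$ \large \frac{\partial J}{\partial \theta_j}=\sum_{k=1}^{n}(h_k-y_k)\,x_{kj} \quad\Rightarrow\quad \nabla_\theta J=X^T(h-y) $$

$$ \large \theta \leftarrow \theta-\alpha\,X^T(h-y) $$

Under that assumption, `delta` corresponds to $h-y$ and `grad` to $X^T(h-y)$. The sum is not divided by $n$, so the effective step size grows with the number of samples.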

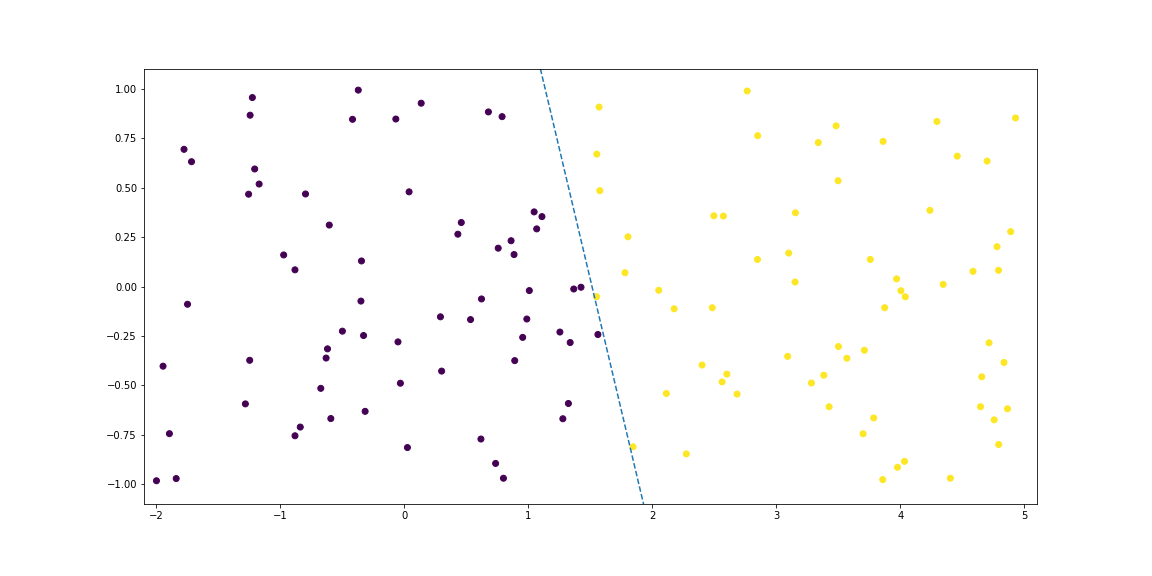

# Synthetic data 5

x1, x2, y = synthData5()

%%time

# Training

rlogb = logisticRegression(rate=0.001, iters=512)

rlogb.fit(x1, x2, y=y)

rlogb.pred(x1, x2)

Wall time: 52.9 ms
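`pred` returns a column of booleans, so one simple way to judge the fit is to compare it with the labels. The helper below is a hypothetical addition (`accuracy` is not part of the notebook), and it assumes the labels are coded as 0/1:

```python
import numpy as np

def accuracy(pred, y):
    """Fraction of predictions that match the 0/1 labels."""
    pred = np.asarray(pred).ravel().astype(bool)   # flatten the (n, 1) column
    y = np.asarray(y).ravel().astype(bool)
    return float(np.mean(pred == y))

# Illustrative values only: 2 of the 3 predictions match.
score = accuracy([[True], [False], [True]], [1, 0, 0])
```

With the objects defined above, the call would look like `accuracy(rlogb.pred(x1, x2), y)`.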

To find the boundary line components:

$$ \large \theta_0+\theta_1 x_1+\theta_2 x_2=0 $$

Considering $\large x_2$ as the dependent variable:

$$ \large x_2=-\frac{\theta_0+\theta_1 x_1}{\theta_2} $$

# Prediction

w0, w1, w2 = rlogb.theta[0]

f = lambda x: - (w0 + w1*x)/w2
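As a sanity check on the boundary formula, every point on the line should have a linear score of zero and therefore a predicted probability of exactly 0.5. The sketch below uses hypothetical parameter values rather than the fitted `rlogb.theta`, and assumes $\theta_2 \neq 0$:

```python
import numpy as np

# Hypothetical fitted parameters (theta_0, theta_1, theta_2); not the notebook's values.
w0, w1, w2 = -1.0, 2.0, 0.5

def boundary(x):
    """x2 coordinate of the decision boundary at x1 = x (requires w2 != 0)."""
    return -(w0 + w1 * x) / w2

xs = np.linspace(-2.0, 2.0, 5)
z = w0 + w1 * xs + w2 * boundary(xs)     # linear score along the boundary
p = 1.0 / (1.0 + np.exp(-z))             # sigmoid of the score
```

Points with $x_2$ above or below the line get a score of opposite sign, which is what the `> 0.5` threshold in `pred` separates.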